Evaluation

Understanding our impact and supporting continual learning and improvement are essential to ensuring we target resources effectively. Our evaluation team works to ensure that what we do is evidence-based.

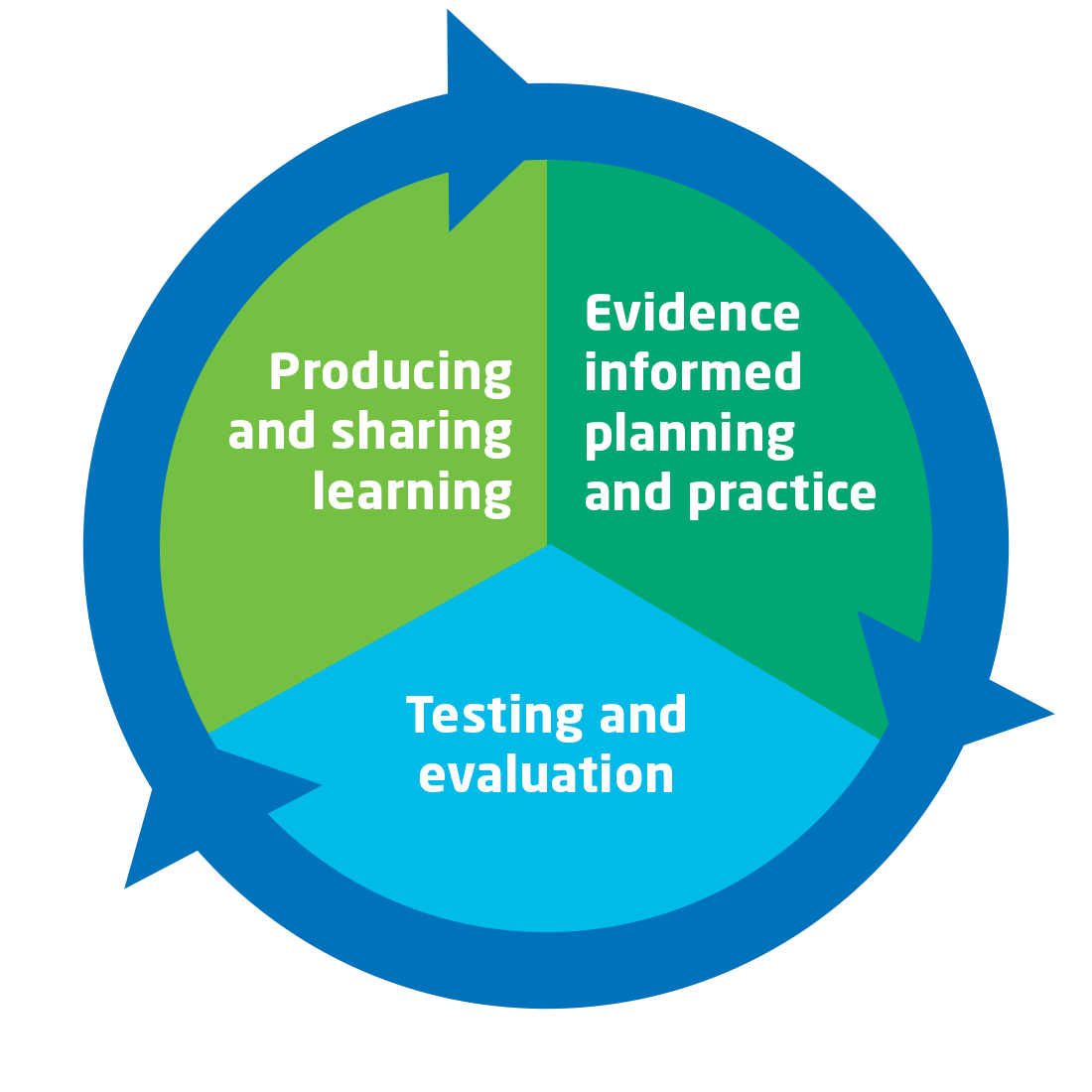

That work covers 3 different strands:

Evidence informed planning and practice

Our dedicated evaluation team reviews and collates evidence from around the world to feed into our engineering and technology outreach delivery and decision making. Drawing on our own evaluations and those conducted by other organisations, we learn from the best published research and identify gaps in knowledge where we may be able to test new approaches.

We're currently working on the first in a series of 'what works' reviews, looking at what works to build engineering and technology career aspirations in girls. The review will be shared on Tomorrow's Engineers.

Testing and evaluation

We want our work with young people to make a difference, so we evaluate our outreach programmes to understand what is working, make changes and improvements and demonstrate impact.

We share the results to add to the collective understanding of what works well and where we can improve. You can access the headlines for each of our programmes below.

Producing and sharing learning

To support the wider community, we share our learning on evaluation methods and on what works in STEM outreach through a range of resources and activities. Our tools and methodology are available on the Tomorrow’s Engineers website, and we hope other organisations will find them useful as they evaluate their work.

These resources include guides to designing surveys and conducting evaluations with young people, an interactive measures bank with sample questions, and our impact framework for engineering outreach, as well as webinars and tutorials on how to get the most out of them.

We used a combination of surveys and interviews with students and teachers to evaluate The Big Bang Competition. We explored students’ motivations for participating and their experience of the programme.

We evaluate The Big Bang Fair through surveys gathered at the event. Over 2,300 students and over 300 teachers completed a survey about their experience of The Fair (2022) and the benefits they feel they gained from attending.

We surveyed over 1,100 students and over 50 teachers taking part in a Big Bang at School event. We explored their experiences and the perceived benefits of taking part, and looked at which groups of students felt most engaged with the programme.

Energy Quest is part way through a 3-year external evaluation, exploring changes in the impact students perceive across three iterations of the content. Alongside this external evaluation, we conducted a smaller pre-post evaluation to look at changes in key outcomes for students, using matched data for 174 students across three schools.

This evaluation drew on surveys with over 400 students and 68 teachers taking part in the Robotics Challenge heats. We explored which students take part in the programme, their experiences and the benefits they feel they gained from it.

Our bursary schemes operate through several of our programmes (Robotics Challenge, Big Bang at School and Big Bang Fair) and also support access to Neon activities. We evaluated their impact using a mix of monitoring data, surveys and interviews.